Augmented Ubiquity: The First 4D Gaussian Splat Captured with a botspot Scanner

We have written before about 4D Gaussian Splatting as an emerging technology with significant potential for dynamic human capture. Until recently, however, it remained something we discussed in theory. That changed when the team at KreativInstitut.OWL used our NEO full-body scanner to produce the first 4D Gaussian Splat ever captured with one of our systems, as part of their immersive VR music composition Augmented Ubiquity.

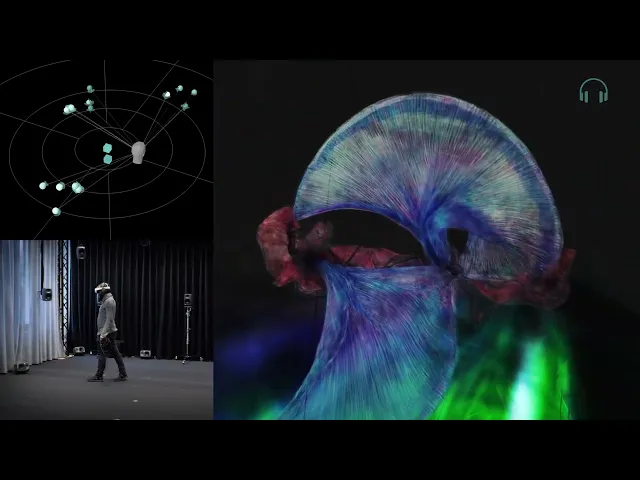

The result is a fully volumetric, photorealistic capture of a live dance performance that can be explored from any position in three-dimensional space and rendered in real time. You can see it for yourself below, and watch the team explain how they built it in their case film.

What 4D Gaussian Splatting Actually Does

To understand why this project is technically significant, it helps to know what separates 4D Gaussian Splatting from standard photogrammetric scanning. In a conventional workflow, multiple images of a static subject are used to reconstruct a three-dimensional mesh with texture. This is what the NEO was designed to do, and it does it with high accuracy. The limitation is the word "static": any movement during capture breaks the reconstruction.

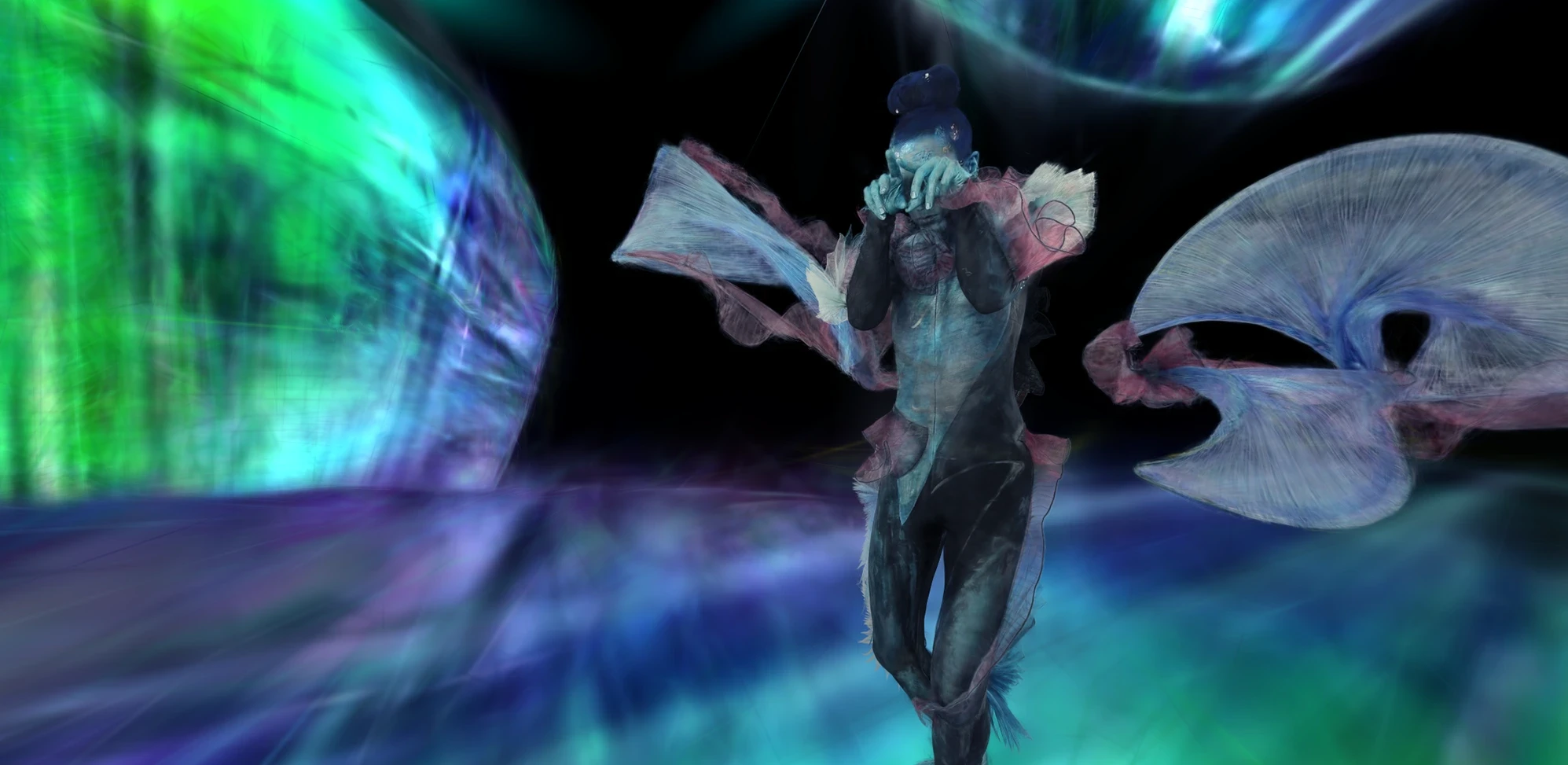

Gaussian Splatting, which we covered in an earlier article, represents a scene not as a polygon mesh but as millions of small mathematical "blobs" (3D Gaussians), each carrying information about its position, shape, colour and opacity. The "4D" extension adds the dimension of time: instead of capturing a single static moment, the system learns how those blobs move and deform across a sequence of frames. The output is a dynamic scene that can be rendered in real time and explored freely from any angle, which is what makes it suitable for VR.
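To make the idea more concrete, here is a minimal sketch of a time-dependent Gaussian primitive and of the front-to-back alpha blending used to combine overlapping blobs along a single viewing ray. It is an illustration under heavily simplified assumptions, not the pipeline used for Augmented Ubiquity: the `Gaussian4D` class, its straight-line velocity motion model and the isotropic falloff evaluated in 3D are hypothetical choices for readability. Published 4DGS methods learn far richer deformation models and project anisotropic 3D Gaussians onto the image plane.

```python
import math
from dataclasses import dataclass


@dataclass
class Gaussian4D:
    """Hypothetical splat primitive whose centre moves over time."""
    position: tuple[float, float, float]  # centre at t = 0 (metres)
    velocity: tuple[float, float, float]  # assumed linear motion model, illustrative only
    scale: float                          # isotropic standard deviation (metres)
    colour: tuple[float, float, float]    # RGB in [0, 1]
    opacity: float                        # peak opacity in [0, 1]

    def centre_at(self, t: float) -> tuple[float, ...]:
        # "Deform" the primitive in time: here just a straight-line displacement.
        return tuple(p + v * t for p, v in zip(self.position, self.velocity))

    def alpha_at(self, point, t: float) -> float:
        # Opacity falls off with squared distance from the moved centre.
        c = self.centre_at(t)
        d2 = sum((a - b) ** 2 for a, b in zip(point, c))
        return self.opacity * math.exp(-0.5 * d2 / self.scale ** 2)


def composite(samples):
    """Front-to-back alpha blending of (colour, alpha) samples, nearest first."""
    out = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through
    for colour, alpha in samples:
        for i in range(3):
            out[i] += transmittance * alpha * colour[i]
        transmittance *= 1.0 - alpha
    return tuple(out), transmittance


def render_ray(gaussians, origin, direction, t):
    """Colour seen along one ray at time t; `direction` must be unit length."""
    samples = []
    for g in gaussians:
        c = g.centre_at(t)
        depth = sum((ci - oi) * di for ci, oi, di in zip(c, origin, direction))
        if depth <= 0:
            continue  # primitive is behind the camera
        closest = tuple(o + depth * d for o, d in zip(origin, direction))
        samples.append((depth, g.colour, g.alpha_at(closest, t)))
    samples.sort(key=lambda s: s[0])  # sort by depth, nearest first
    return composite([(col, a) for _, col, a in samples])
```

Rendering the same ray at two different values of `t` shows the key difference from static splatting: a blob that drifts away from the ray stops contributing colour, without any mesh being rebuilt.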

For hair, fabric, translucent materials and reflections, Gaussian Splatting also has a natural advantage over mesh-based photogrammetry. These surfaces are notoriously difficult to reconstruct as clean geometry, but Gaussian primitives handle them well because they represent appearance rather than explicit surface structure.

Taking the NEO Out of Its Comfort Zone

KreativInstitut.OWL used a botspot NEO for the visual capture of Augmented Ubiquity. The NEO is built around 112 precisely aligned cameras that capture the human body simultaneously from every angle. In standard operation, it is used for high-resolution static photogrammetry: fashion and fit applications, medical body measurement, digital human production. The subject stands still; the system fires; the result is an accurate 3D model. That is what it is designed for.

To capture a live dance performance, the KreativInstitut.OWL team developed an entirely new workflow. As they describe it, they pushed the scanner out of its comfort zone. Instead of triggering a single simultaneous capture, they extended the system to record a sequence of multi-view frames across the duration of the performance. Each frame captures the dancer at a precise instant from all 112 positions at the same time. The full sequence of frames provides the temporal data that the 4DGS pipeline needs to learn motion and deformation. The static sculptural elements in the scene, which did not need this treatment, were captured using the NEO's standard workflow.
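A capture like this produces one image per camera per time step, and a 4DGS pipeline generally expects every time step to be a complete multi-view frame. The sketch below groups images into frames and flags incomplete ones. The file-naming scheme (`frame_<n>_cam_<m>.jpg`) and the zero-based camera indices are hypothetical; the team's actual file layout is not documented here.

```python
import re
from collections import defaultdict

NUM_CAMERAS = 112  # camera count of the NEO, as stated above

# Hypothetical naming scheme, e.g. "frame_00042_cam_017.jpg".
PATTERN = re.compile(r"frame_(\d+)_cam_(\d+)\.jpg$")


def group_by_frame(filenames, num_cameras=NUM_CAMERAS):
    """Group images into multi-view frames; return (complete, incomplete)."""
    frames = defaultdict(dict)
    for name in filenames:
        m = PATTERN.search(name)
        if m is None:
            continue  # ignore files that do not follow the scheme
        frame_idx, cam_idx = int(m.group(1)), int(m.group(2))
        frames[frame_idx][cam_idx] = name

    complete, incomplete = {}, {}
    for frame_idx in sorted(frames):
        views = frames[frame_idx]
        missing = sorted(set(range(num_cameras)) - set(views))
        if missing:
            incomplete[frame_idx] = missing  # camera indices with no image
        else:
            # Order views by camera index so every frame has the same layout.
            complete[frame_idx] = [views[c] for c in range(num_cameras)]
    return complete, incomplete
```

Dropping or repairing incomplete frames before training is a design choice: a frame with missing views leaves parts of the body unobserved at that instant, which is exactly the kind of gap the model would otherwise have to guess.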

Manuel Steitz of wunderkammer, working with the KreativInstitut.OWL team, processed the dance footage through a 4D Gaussian Splatting pipeline to produce the final dynamic model. The three static sculptures were rendered as 3D Gaussian Splats to ensure visual consistency.

Why Camera Quality and Synchronisation Matter

The foundational 4DGS research identifies large motions and imprecise camera data as among the main failure modes of the technology. Inaccurate camera positions, timing inconsistencies between frames, or insufficient coverage all degrade the training data and produce artefacts that cannot be removed in post-processing.

One challenge the team encountered was synchronisation: recording dynamic sequences across 112 cameras is a different problem from single-frame capture, and perfect synchronisation across all camera positions is difficult to achieve, even in post-production. That the results hold up as well as they do reflects how much the NEO's image quality and camera density contribute, even when the workflow is pushed well beyond its original brief.

Camera density matters for similar reasons. A moving human body creates constantly changing occlusions: an arm crosses in front of a torso, fabric moves across limbs, a hand passes close to the face. With 112 positions distributed around the subject, almost every part of the body is directly observed from multiple angles at every moment. Sparser rigs would require the model to infer geometry it cannot directly see, which introduces uncertainty precisely in the areas where a dance performance is most active.
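The effect of camera density on occlusion can be illustrated with a deliberately crude 2D model. It assumes cameras evenly spaced on a single horizontal ring (the NEO's real layout is different) and treats an occluding arm as a blocked range of viewing directions; the 16-camera "sparse rig" is a hypothetical comparison, not a specific product.

```python
import math


def ring_cameras(n, radius=2.0):
    """Hypothetical layout: n cameras evenly spaced on a horizontal circle (metres)."""
    return [(radius * math.cos(2 * math.pi * k / n),
             radius * math.sin(2 * math.pi * k / n)) for k in range(n)]


def visible_views(cameras, point, normal, blocked=()):
    """Count cameras that directly see `point` on a surface with outward `normal`.

    blocked: (start_deg, end_deg) ranges of viewing directions, measured at the
    point, that an occluder such as an arm hides (a stand-in for real ray tests).
    """
    count = 0
    for cx, cy in cameras:
        dx, dy = cx - point[0], cy - point[1]
        if dx * normal[0] + dy * normal[1] <= 0:
            continue  # camera is behind the surface
        angle = math.degrees(math.atan2(dy, dx)) % 360
        if any(lo <= angle <= hi for lo, hi in blocked):
            continue  # line of sight is occluded
        count += 1
    return count


# A point on the torso facing +x, with an arm covering most directions in front of it.
point, normal = (0.15, 0.0), (1.0, 0.0)
arm = [(0.0, 75.0), (285.0, 360.0)]
dense = visible_views(ring_cameras(112), point, normal, arm)
sparse = visible_views(ring_cameras(16), point, normal, arm)
```

In this toy setup the sparse ring loses every direct view of the point while the dense ring keeps several grazing views, which mirrors the argument above: more positions leave fewer blind spots when a limb passes in front of the body.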

An Innovative Project from an Innovative Team

Augmented Ubiquity is more than a technical demonstration. The full artwork combines the volumetric visual capture with a spatial audio composition by Lou Kilger, designed from the outset to function from any listening position in three-dimensional space, and a dance performance by Ria Rehfuß developed specifically for the confined dimensions of the scanner booth. Dynamic binaural spatialisation using SPAT Revolution adapts continuously to the viewer's head movements, giving the experience six full degrees of freedom in both vision and sound.
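Head-tracked binaural audio rests on one recurring computation: where each sound source lies relative to the listener's current head position and orientation, so the renderer can pick the matching direction and distance cues. The sketch below shows that step on the horizontal plane only. It does not model SPAT Revolution or its API; the coordinate conventions (yaw counter-clockwise from +x, positive azimuth to the listener's left) are assumptions chosen for the example.

```python
import math


def head_relative_direction(source, listener, yaw_deg):
    """Return (azimuth_deg, distance_m) of a source relative to the listener's head.

    source, listener: (x, y) positions in metres on the horizontal plane.
    yaw_deg: head yaw, counter-clockwise from the +x axis (assumed convention).
    Azimuth 0 means straight ahead; positive values are to the listener's left.
    """
    dx, dy = source[0] - listener[0], source[1] - listener[1]
    distance = math.hypot(dx, dy)
    world_azimuth = math.degrees(math.atan2(dy, dx))
    # Remove the head rotation and wrap the result into [-180, 180).
    relative = (world_azimuth - yaw_deg + 180.0) % 360.0 - 180.0
    return relative, distance
```

Re-evaluating this every time the headset reports a new pose is what lets a fixed source stay anchored in the room: turning the head 90 degrees towards a source on the left moves it from +90 to 0 degrees, straight ahead.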

The result is currently on display at KIO, KreativInstitut.OWL's facility, with the team working toward a standalone VR headset release. You can read the full project description, including the creative and technical thinking behind every component, on the KreativInstitut.OWL project page.

What impresses us about this collaboration is the ambition the team brought to the problem. They did not set out to find a use case that fits within the documented capabilities of the hardware. They identified what they needed to create, worked out what the scanner would require to do, and built the workflow around it. That kind of approach is exactly what pushes the technology forward, and we are glad the NEO gave them a foundation solid enough to build on.

Looking Ahead

Projects like Augmented Ubiquity signal where professional body scanning infrastructure is heading. The same qualities that make the NEO well suited to high-accuracy static capture (precise synchronisation, dense multi-angle coverage and consistent image quality) turn out to be the qualities that next-generation dynamic capture workflows need most. As 4DGS and other volumetric formats continue to mature, we expect to see more workflows that treat a multi-camera body scanning rig as the starting point for capture pipelines its original brief never anticipated.

We would like to thank the entire KreativInstitut.OWL team for their creativity and for sharing the project with us. If you are working on a research or production project that requires professional-grade human digitisation, contact our team to discuss what the NEO can do for your pipeline.
